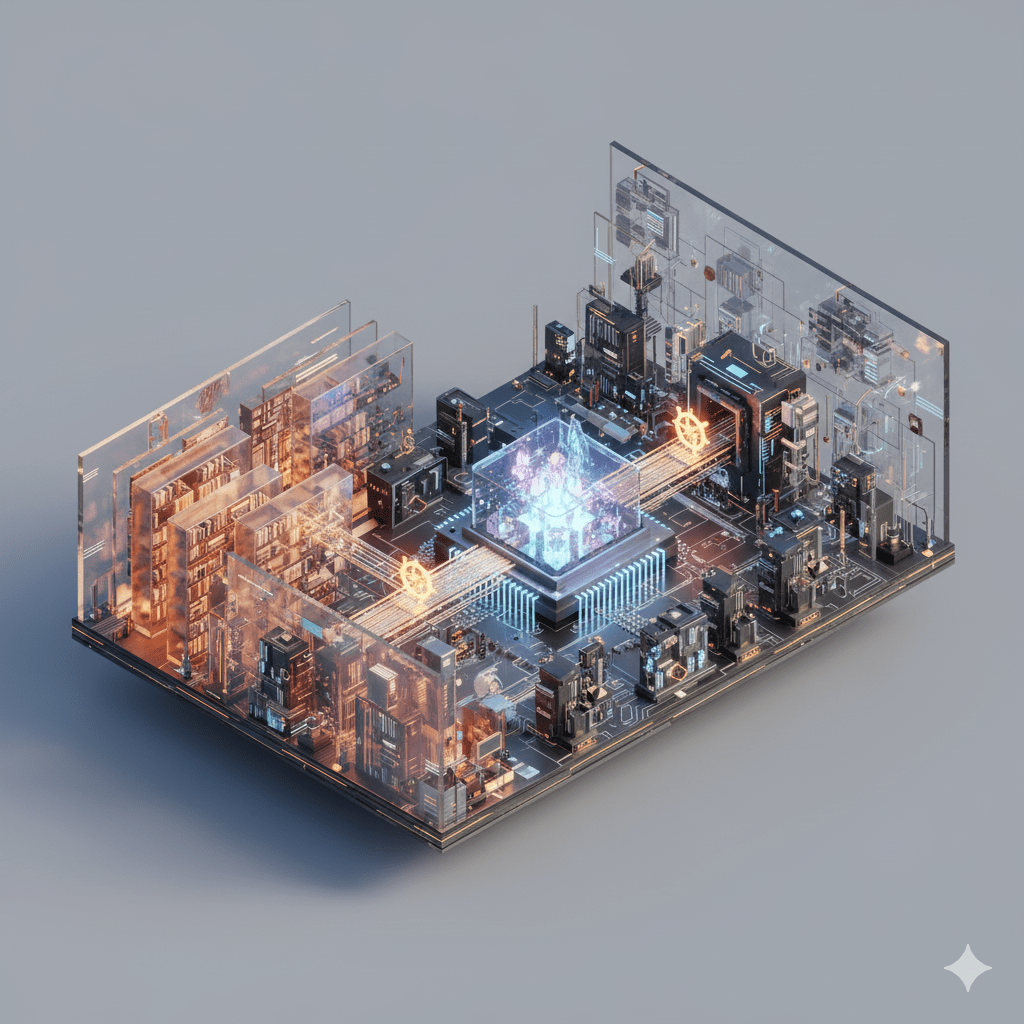

What would it take for an AI system to develop genuine values, rather than having them engineered in after the fact? I’ve been circling this question since my PhD, where I worked on novelty search: an evolutionary algorithm that finds remarkable things precisely by abandoning the objective. Can evolution do the same for alignment?
